Key Takeaways

- Hardware-Backed Isolation: Titanium Intelligence Enclaves (TIE) physically isolate data processing, preventing even Google engineers from accessing it.

- Verifiable Privacy: Remote attestation allows user devices to verify the integrity of the enclave before sending any data.

- Ephemeral Processing: All data and computations are immediately discarded after the session, leaving no trace.

- Enterprise Ready: Solves the “black box” problem for businesses hesitant to upload sensitive data to public AI clouds.

In the race to dominate artificial intelligence, a critical bottleneck has emerged: trust. For years, enterprises and privacy-conscious users have faced a difficult choice—upload sensitive data to the cloud to access powerful models like Gemini, or keep data local and settle for less capable, on-device models. The fear of data leaks, whether through malicious hacks or inadvertent training on user data, has been a major barrier to adoption.

Google’s answer to this dilemma is Private AI Compute, a new cloud architecture launched in November 2025. By leveraging specialized hardware known as Titanium Intelligence Enclaves (TIE), Google claims to have solved the privacy paradox, offering the immense power of cloud-based AI with the data security guarantees of a local device.

This deep dive explores the technical architecture of Private AI Compute, how Titanium Intelligence Enclaves work, and what this shift means for the future of secure cloud computing.

Background: The Path to Privacy

The Privacy Paradox

As Generative AI Models grew in size—from billions to trillions of parameters—running them locally became increasingly difficult. While companies like Apple pushed for on-device processing, the most capable models still required the massive computational resources of data centers. This created a “trust gap”: users had to blindly trust cloud providers not to peek at their data.

The Rise of Confidential Computing

The industry’s initial response was “Confidential Computing,” a set of technologies designed to encrypt data while it’s being processed (data-in-use). However, early implementations were often performance-constrained or limited to specific CPU architectures.

Google’s Titanium Evolution

Google has been developing its custom silicon for years. The “Titanium” project originally focused on offloading infrastructure tasks (like networking and security) from the main host CPU to dedicated cards. With Private AI Compute, Google has repurposed and evolved this architecture specifically for the unique demands of AI workloads.

Understanding Private AI Compute

Private AI Compute is not just a software feature; it is a fundamental re-architecture of how AI workloads are processed in Google’s data centers. It combines custom hardware, cryptographic protocols, and a minimized trusted computing base (TCB) to create a “clean room” for AI.

How It Works

The process follows a strict “verify-then-trust” model:

- Remote Attestation: Before your device sends a single byte of data, it challenges the cloud server. The server must prove it is running genuine, unmodified Google hardware and software inside a Titanium Enclave.

- Secure Tunnel: Once verified, your device establishes an encrypted tunnel (using protocols like Noise) directly to the enclave.

- Isolated Processing: Data enters the enclave, is processed by the AI model on custom TPUs, and the response is encrypted and sent back.

- Immediate Destruction: As soon as the inference is complete, the enclave wipes the data from memory. No logs are kept, and the data is never written to disk.

Why It Matters

This architecture ensures that no human, not even a Google administrator with root access, can view the data inside the enclave. The cryptographic keys are held within the hardware itself, making physical extraction or software-based snooping mathematically infeasible.

Understanding Titanium Intelligence Enclaves (TIE)

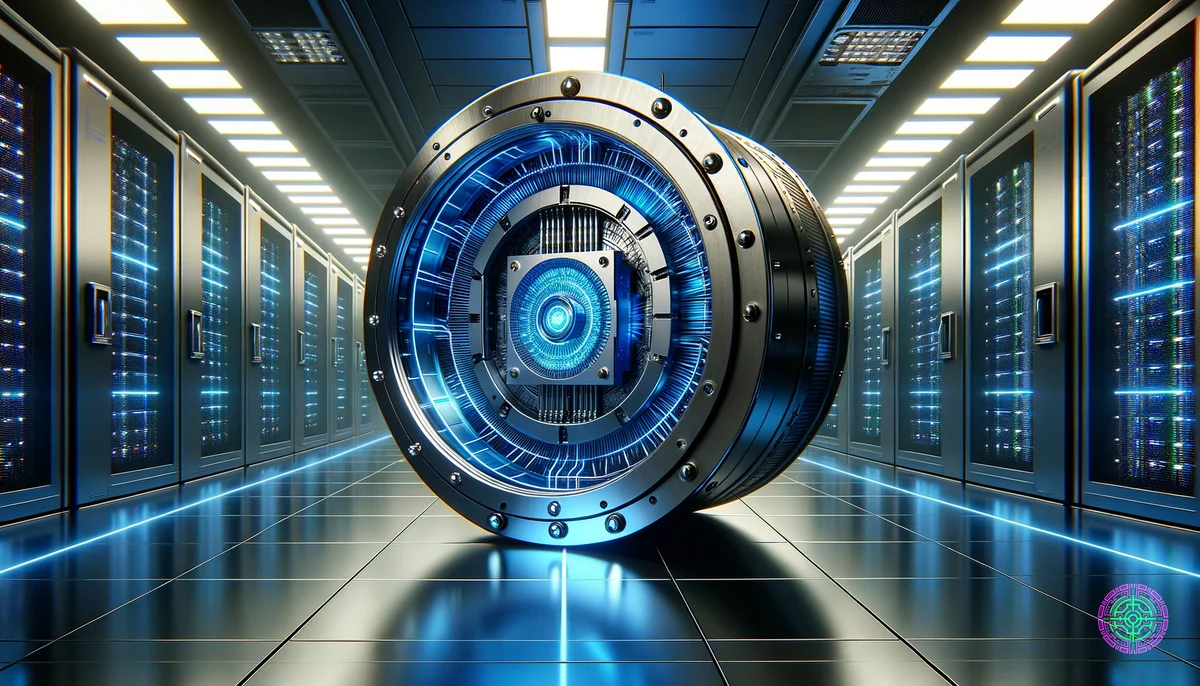

The heart of this system is the Titanium Intelligence Enclave. Think of it as a digital vault inside the data center.

Technical Specifications

- Hardware Root of Trust: The security is anchored in a custom Titan security chip that validates the boot process.

- Memory Encryption: All data in memory is encrypted with keys that are generated inside the processor and never leave it.

- Peripheral Isolation: The TIE isolates the AI accelerators (TPUs) from the host machine. The host manages the scheduling but cannot see the payload.

Defense in Depth

Google has implemented multiple layers of defense:

- IP Blinding: Requests are routed through third-party relays to hide the user’s IP address from Google’s internal logs.

- Binary Transparency: The software running in the enclave is published to a transparency log, allowing independent researchers to audit the code.

- Intrusion Detection: Custom motherboards feature physical intrusion detection to prevent tampering.

The Data

The shift to Private AI Compute is driven by market demand for security.

Key Statistics:

- $100 Billion+: The estimated investment in AI infrastructure in late 2025, with a significant portion dedicated to secure compute.

- 220 Million: OpenAI’s projected paying user base, highlighting the scale of cloud AI usage that Google aims to capture with superior privacy.

- Zero: The number of persistent logs Google claims to retain from Private AI Compute sessions.

Industry Impact

Impact on Enterprise

For sectors like healthcare, finance, and legal, this is a game-changer. Hospitals can now potentially use state-of-the-art diagnostic AI models on patient data without violating HIPAA, as the data technically never becomes “visible” to the cloud provider.

Impact on Consumers

For everyday users, this powers features like the new “Scam Detection” on Pixel phones. While the detection happens on-device for speed, complex cases can be offloaded to the cloud for deeper analysis without the user worrying that their private calls are being recorded or analyzed for ads.

Challenges & Limitations

Despite the robust architecture, no system is perfect.

- Performance Overhead: The encryption and attestation processes introduce a small amount of latency. While Google claims this is negligible, real-world testing on poor connections may vary.

- Hardware Dependency: This solution is tightly coupled with Google’s custom hardware (TPUs and Titanium). It is not easily portable to other clouds or on-premise data centers.

- Trusting the Silicon: Ultimately, users still have to trust that Google’s hardware design is flawless. While “verifiable,” the complexity of modern chips means hidden hardware bugs are always a theoretical risk.

What’s Next?

Short-Term (1-2 years)

Expect to see “Private Compute” become the standard tier for all enterprise AI services. Competitors like AWS (Nitro Enclaves) and Azure (Confidential Computing) will likely accelerate their own specialized AI hardware offerings to match Google’s TIE.

Long-Term (5+ years)

The industry may move towards “Homomorphic Encryption,” where data can be processed while it remains fully encrypted, eliminating the need to ever decrypt it, even inside an enclave. Until then, hardware-based isolation is the gold standard.

The Bottom Line

Google’s Private AI Compute represents a maturing of the AI industry. The market is moving past the “move fast and break things” era into a phase where security and privacy are foundational. By using Titanium Intelligence Enclaves to physically and cryptographically isolate user data, Google is attempting to prove that you don’t have to sacrifice privacy for intelligence. For the first time, the cloud might actually be as secure as the device in your pocket.

🦋 Discussion on Bluesky

Discuss on Bluesky