Key Takeaways

- The Number: $50 Billion. That is the total investment Anthropic has committed to building out American AI infrastructure, with facilities coming online throughout 2026.

- The Locations: Massive new data center campuses are breaking ground in Texas, New Mexico, and New York.

- The Jobs: The project is expected to create about 2,400 construction jobs and 800 permanent roles.

- The Partners: A strategic triad with Microsoft and NVIDIA is meant to give Anthropic the chips and the cloud it needs to scale.

In the world of AI, code is king, but compute is the kingdom. And Anthropic just bought a very large kingdom.

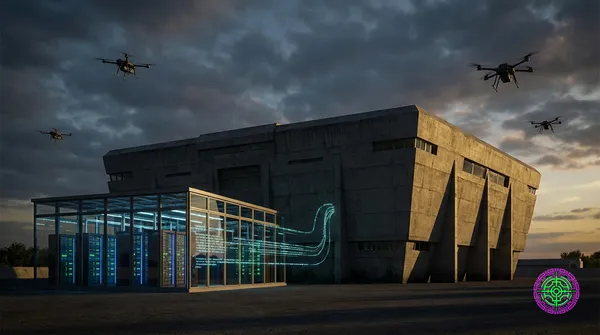

On November 12, 2025, the company announced a staggering $50 billion investment to build out American AI infrastructure. This isn’t just about renting servers; it’s about pouring concrete, laying fiber, and installing gigawatts of power. It is one of the largest private infrastructure projects of the decade, signaling that Anthropic is done playing the underdog.

The Master Plan: Texas, New Mexico, and Beyond

Anthropic is partnering with UK-based Fluidstack to develop these custom facilities. The choice of location is strategic:

- Texas & New Mexico: Chosen for their abundant land and, crucially, access to renewable energy (solar and wind).

- New York: Likely chosen for proximity to latency-sensitive financial-sector customers and for access to talent.

These aren’t your average server farms. They are next-generation “AI Factories”, custom-built for Anthropic’s workloads and designed to handle the extreme thermal and power density of modern accelerators such as NVIDIA’s Blackwell and Rubin chips. The facilities are expected to come online throughout 2026.

The Strategic Triad: Anthropic, Microsoft, NVIDIA

Perhaps more interesting than the buildings are the handshakes behind them. Anthropic has solidified a massive alliance:

- Microsoft: Anthropic has committed to purchasing $30 billion of Azure compute capacity. In return, Claude is now deeply integrated into Microsoft Foundry and Microsoft 365 Copilot. This is a pragmatic move: Microsoft gets a hedge against OpenAI, and Anthropic gets access to the world’s best enterprise distribution channel.

- NVIDIA: Much of the hardware behind this expansion is expected to be “Green Team” silicon. The partnership is designed to secure Anthropic access to the latest GPUs, a critical advantage in a supply-constrained market.

Why Now? The “Compute Wall”

Why spend $50 billion? Because the industry is hitting the “Compute Wall.”

Earlier frontier models like Claude 3.5 and GPT-4 were trained on clusters costing hundreds of millions of dollars. The next generation (Claude 5, GPT-6) is expected to require clusters costing tens of billions.

- Data Scarcity: High-quality text data is running out. The next frontier is synthetic reasoning: generating billions of chains of thought to train models on logic rather than just patterns.

Anthropic is betting that the only way to stay in the game is to own the infrastructure that powers it.

Economic & Political Impact

“Made in America” AI

This investment aligns with the administration’s push for “Sovereign AI.” By keeping the physical infrastructure on U.S. soil, Anthropic is positioning itself as a “National Champion”, a safe, American alternative to foreign AI development.

The Energy Question

The elephant in the room is power. These data centers will consume gigawatts of electricity. Anthropic has pledged to prioritize renewable energy, but the sheer scale of demand will put immense pressure on local grids. Expect “AI vs. Grid” to be a major political headline in 2026.

The Bottom Line

For a long time, Anthropic was seen as the “research lab”—the quiet, safety-focused alternative to OpenAI’s product juggernaut. With this $50 billion check, that narrative is dead. Anthropic is now an industrial titan. They aren’t just building a chatbot; they are building the physical backbone of the intelligence age.
