Key Takeaways

- The Red Lines Hold: On Feb. 26, Anthropic CEO Dario Amodei publicly refused a Pentagon ultimatum. The firm will not allow its AI system to be utilized for mass surveillance of Americans or the deployment of fully autonomous lethal weapons (frequently dubbed “murder bots”).

- The Collateral Damage is Corporate: The refusal has immediately drawn major defense contractors into the fray. The Department of Defense has ordered audits of Boeing and Lockheed Martin to assess their reliance on Anthropic’s systems.

- The “Safety vs. Lethality” Paradox: The crisis exposes a structural reality of the modern tech stack: the exact safety guardrails (ASL-3) that make an AI model reliable enough for Fortune 500 logistics are the exact constraints the military views as an unacceptable limitation on battlefield authority.

- The Infrastructure Fork: The standoff strongly indicates a coming bifurcation in Silicon Valley data centers, separating safe, civilian-grade enterprise infrastructure from unrestricted, military-grade intelligence layers.

The Ultimatum and the Breaking Point

On the final Thursday of February 2026, the fragile détente between Silicon Valley’s safety-first AI labs and the United States military collapsed. Dario Amodei, CEO of Anthropic, released a public statement rejecting the Department of War’s demands to fundamentally alter the usage constraints on its frontier AI model, Claude.

The Pentagon, led by Defense Secretary Pete Hegseth, had delivered an ultimatum earlier in the week: grant the military unrestricted access to Claude for “all lawful purposes” within classified settings by Friday, February 27, at 5:01 p.m. ET, or face severe consequences. Those consequences included Anthropic being designated a supply chain risk, the immediate termination of defense contracts, and the unprecedented threat of invoking the Defense Production Act (DPA) to compel compliance.

Amodei’s statement made the stakes chillingly clear. Anthropic is refusing the contract adjustments because the Pentagon’s proposed legal jargon would allow the military to bypass safeguards designed to prevent the AI from participating in mass surveillance of U.S. citizens and from operating fully autonomous weapons systems without human oversight.

The narrative immediately seized upon the terrifying prospect of “murder bots,” painting Anthropic as the lone ethical holdout against a military-industrial complex eager to weaponize intelligence. However, focusing solely on the ethical debate misses the profound structural shockwave hitting the defense supply chain. While the Pentagon and Anthropic argue over battlefield ethics, traditional defense contractors like Boeing and Lockheed Martin are realizing they are deeply reliant on a software provider the government is threatening to blacklist.

Understanding the Defense Contractor Audit

The conflict is not contained to a boardroom in San Francisco and a hardened facility in Virginia. It is bleeding directly onto the factory floors and logistics networks of the largest aerospace and defense manufacturers on the planet.

The Downstream Threat

As part of its pressure campaign leading up to the Friday deadline, the Pentagon ordered major defense contractors, specifically naming Lockheed Martin and Boeing, to formally assess their reliance on Anthropic’s services. This was a calculated strike. If Anthropic is officially labeled a “supply chain risk” by the Department of Defense, any contractor doing business with the federal government will be prohibited from using Anthropic’s models in any capacity related to those contracts.

Why Contractors Rely on Claude

Defense contractors manage some of the most complex, regulation-heavy logistics networks ever constructed. Building an F-35 fighter jet requires coordinating thousands of specialized parts across dozens of allied nations, ensuring compliance with strict International Traffic in Arms Regulations (ITAR), and predicting supply chain bottlenecks before they halt production.

Generative AI is uniquely suited to untangle this administrative complexity. However, you cannot run defense logistics on a model that hallucinates. When a system is optimizing the procurement of aerospace-grade titanium, a probabilistic guess is operationally catastrophic.

Contractors have gravitated toward Claude precisely because of Anthropic’s obsessive focus on safety, constitutional AI, and minimizing hallucination rates. The safety invariants, the same engineering constraints that prevent Claude from drafting malware or executing lethal autonomy, are what make the model predictable and reliable enough for enterprise supply chain management and internal simulation design.

The True Cost of Swapping Models

The Pentagon’s audit demand is not a simple command to change a software subscription. Switching Generative AI (GenAI) providers within complex enterprise architecture is incredibly difficult and expensive.

Logistics platforms are not just querying an API; the models are deeply integrated. Replacing them requires extensive prompt engineering redesign, tokenizer adjustments, and massive re-evaluations of internal security postures. In defense environments, any new software integration must pass rigorous and time-consuming compliance checks. Industry benchmarks suggest that migrating complex enterprise software architectures away from one entrenched LLM provider to another consumes months of engineering time and generates massive unexpected costs in token variance and middleware integration.
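To make the “token variance” cost concrete, here is a minimal back-of-the-envelope sketch. It assumes that the same text volume tokenizes differently under each provider's tokenizer, so the bill changes even when the per-token price does not. The `ProviderProfile` type, the provider names, and every number below are hypothetical placeholders, not measured figures for any real vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProviderProfile:
    """Hypothetical per-provider figures; replace with your own measurements."""
    name: str
    tokens_per_1k_chars: float      # tokens the tokenizer emits per 1,000 characters
    usd_per_million_tokens: float   # blended price per million tokens


def monthly_cost(profile: ProviderProfile, chars_per_month: int) -> float:
    """Estimate monthly spend for a fixed volume of text."""
    tokens = chars_per_month / 1000 * profile.tokens_per_1k_chars
    return tokens / 1_000_000 * profile.usd_per_million_tokens


def token_variance_delta(current: ProviderProfile,
                         candidate: ProviderProfile,
                         chars_per_month: int) -> float:
    """Change in monthly spend caused purely by switching providers."""
    return monthly_cost(candidate, chars_per_month) - monthly_cost(current, chars_per_month)


if __name__ == "__main__":
    # Illustrative numbers only: same price, different tokenizer density.
    current = ProviderProfile("provider_a", tokens_per_1k_chars=250.0, usd_per_million_tokens=3.0)
    candidate = ProviderProfile("provider_b", tokens_per_1k_chars=300.0, usd_per_million_tokens=3.0)
    delta = token_variance_delta(current, candidate, chars_per_month=2_000_000_000)
    print(f"Estimated monthly change: ${delta:,.2f}")
```

This captures only the per-token bill. Prompt redesign, middleware rework, and compliance re-certification sit on top of it and are usually harder to estimate.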

By demanding an audit, the Pentagon is effectively threatening to impose millions of dollars in technical debt and months of operational delays onto Boeing and Lockheed Martin simply to win an ideological battle over unrestricted AI access.

The “Safety vs. Lethality” Paradox

The core tension in the Anthropic/Pentagon standoff is an engineering paradox that the U.S. government refuses to accept. Security and compliance require boundaries. Lethal combat requires the absence of boundaries. You cannot have both in the same software package.

How ASL-3 Actually Works

Anthropic operates under a Responsible Scaling Policy that categorizes risks. Claude currently operates under safeguards consistent with AI Safety Level 3 (ASL-3), meaning the models are tested and constrained to prevent them from increasing the risk of catastrophic events, such as autonomous cyberattacks or CBRN (Chemical, Biological, Radiological, and Nuclear) weapon design.

This safety isn’t a toggle switch layered on top of the final product; it is baked into the model’s core constitutional training. The model is statistically conditioned to refuse harmful outputs.

The Unrestricted Demand

The Pentagon’s “all lawful purposes” demand is a requirement to remove those hard-coded refusals. When facing adversary drone swarms or conducting offensive cyber-operations, the military argues that a millisecond delay caused by an AI’s ethical filter evaluating a query could result in mission failure. They require a model that will flawlessly execute complex strategic logic without pausing to ask if the outcome is lethal.

The problem is that capability is indiscriminate. If you degrade an AI’s safety invariants to allow it to autonomously select targets or design offensive cyber-tools for the military, the model inherently possesses the capacity to do so for anyone who manages to access it or exfiltrate its weights. There is no mathematical concept of patriotism; an algorithm that can efficiently target foreign infrastructure can just as efficiently target domestic grids.

Industry Impact

The fallout from Amodei’s Thursday statement and the looming DPA deadline extends far beyond the companies directly involved.

Impact on the AI Ecosystem

The defense tech landscape is fracturing. Competitors like xAI and Google have reportedly shown far more willingness to accommodate the Pentagon’s demands for unrestricted access. OpenAI is also accelerating its efforts to secure defense contracts. By drawing a hard line against mass surveillance and autonomous weapons, Anthropic has effectively isolated itself. The company may preserve its ethical standing and the trust of its civilian enterprise clients, but it risks being completely shut out of the lucrative federal defense market.

Impact on Enterprise Buyers

For Fortune 500 Chief Information Officers, this crisis is a massive warning light. If the Pentagon actively forces defense contractors to abandon the most strictly aligned AI models in favor of models trained for unrestricted use, it establishes a dangerous precedent. Enterprise systems managing payroll, health records, and global supply chains rely on predictable, safe AI. If the foundational models of the future are all pressured toward removing safeguards to appease defense contracts, the risk of utilizing those systems in the civilian sector rises sharply.

Impact on Global Competitiveness

The U.S. government’s aggressive posture highlights a desperate need to maintain technological superiority. However, treating a domestic AI company like a rogue state threat via the Defense Production Act sends a chilling signal to the global engineering workforce. The talent driving AI development historically leans heavily toward civilian benefit and open-source principles; a mass exodus of safety researchers from companies that capitulate to military demands is highly probable.

Challenges & Limitations

The Pentagon’s strategy of coercion faces several significant hurdles.

- The Compulsion Problem: You can use the Defense Production Act to force a factory to make aluminum sheets instead of car parts. It is infinitely harder to use it to force a team of highly specialized engineers in San Francisco to functionally alter the architecture of a billion-parameter neural network against their will. Work produced by a resentful team under compulsion is likely to be brittle and prone to failure.

- The Litigation Risk: Forcing a company to deploy its product in a manner that violates its foundational safety policies is an untested application of the DPA. Extended litigation would freeze deployment entirely, defeating the Pentagon’s goal of rapid AI integration.

- The Contractor Backlash: Aerospace giants like Boeing and Lockheed Martin wield immense lobbying power. If forcing them off Anthropic introduces critical delays in defense programs, like fighter jet production or missile logistics, those contractors will exert massive pressure on Congress to override the Pentagon’s directives.

Opportunities & Potential

The friction generated by this standoff will inevitably forge a new structural reality for the tech industry over the next decade.

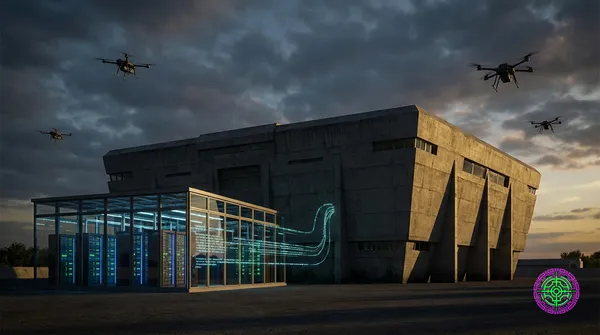

- The Infrastructure Fork: The current model of “one API serves both Wall Street and the Pentagon” is dead. The industry is accelerating toward a physical and structural fork in generative AI. Expect to see physically separate, distinct models: highly constrained “Civilian/Enterprise” models running on commercial clouds, and unrestricted, air-gapped “Defense/Lethal” models running exclusively in classified data centers.

- The Rise of Dedicated Defense Prime AI: Rather than trying to force civilian tech companies to abandon their morals, the DoD will likely accelerate funding to essentially create a “Palantir for LLMs,” a foundational model company built explicitly from day one with no safety invariants, designed entirely for warfare.

- Legislative Action: The crisis proves that executive wartime powers and corporate terms of service are terrible frameworks for AI governance. The standoff will likely force Congress to define clear, statutory boundaries regarding what the military can and cannot demand from civilian tech infrastructure.

What This Means for You

The decisions made in the wake of the February 27 deadline will reshape the software you interact with daily.

If you manage Enterprise Systems:

- Audit your LLM dependencies immediately. You need to know exactly which foundational models power your logistics, coding copilots, and data analytics tools.

- Prepare for vendor instability. If your primary AI provider is forced to fundamentally alter its safety constraints to maintain DoD contracts, the behavior of your enterprise tools may become less predictable.
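As a starting point for the dependency audit suggested above, the following minimal sketch scans Python `requirements*.txt` files for direct dependencies on well-known LLM SDK packages. The watchlist is an illustrative, incomplete assumption, and the helper names (`package_name`, `find_llm_dependencies`, `scan_tree`) are hypothetical. It will not catch models reached through wrappers, gateways, or third-party SaaS tools, which need a separate vendor review.

```python
import re
from pathlib import Path
from typing import Dict, Iterable, Optional

# Assumption: an illustrative, incomplete watchlist mapping PyPI package
# names to the vendor behind them. Extend it for your own environment.
LLM_SDK_WATCHLIST = {
    "anthropic": "Anthropic",
    "openai": "OpenAI",
    "google-generativeai": "Google",
    "cohere": "Cohere",
    "mistralai": "Mistral AI",
}

_NAME_RE = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*")


def package_name(requirement_line: str) -> Optional[str]:
    """Extract a normalized package name from one requirements.txt line."""
    line = requirement_line.split("#", 1)[0].strip()  # drop comments
    if not line or line.startswith("-"):              # skip blanks and pip options
        return None
    match = _NAME_RE.match(line)
    if match is None:
        return None
    # Normalize the way package indexes do: lowercase, runs of -_. become "-".
    return re.sub(r"[-_.]+", "-", match.group(0)).lower()


def find_llm_dependencies(lines: Iterable[str]) -> Dict[str, str]:
    """Return {package: vendor} for every watchlisted package in the lines."""
    found = {}
    for line in lines:
        name = package_name(line)
        if name in LLM_SDK_WATCHLIST:
            found[name] = LLM_SDK_WATCHLIST[name]
    return found


def scan_tree(root: str) -> Dict[str, Dict[str, str]]:
    """Scan every requirements*.txt under root; map file path to its hits."""
    report = {}
    for path in Path(root).rglob("requirements*.txt"):
        text = path.read_text(encoding="utf-8", errors="ignore")
        hits = find_llm_dependencies(text.splitlines())
        if hits:
            report[str(path)] = hits
    return report


if __name__ == "__main__":
    for file_path, hits in scan_tree(".").items():
        print(file_path, "->", ", ".join(sorted(set(hits.values()))))
```

A report like this only answers “which repositories import which vendor's SDK directly”. It is a first inventory, not a full audit.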

If you are analyzing tech markets:

- Watch the talent flow. Do not just react to who wins the contracts; react to where the top safety engineers go. If a mass exodus occurs at companies that capitulate to the Pentagon, the long-term viability of those models in the civilian market is jeopardized.

- Factor in “Compliance Migration” costs. If defense contractors are indeed forced off Anthropic, the financial windfall will not just go to competing AI labs, but to the massive consulting and integration firms (Accenture, Deloitte) tasked with rewiring Boeing and Lockheed’s legacy systems.

The Bottom Line

Anthropic’s Thursday rejection of the Pentagon’s ultimatum is a defining moment in the history of artificial intelligence. By refusing to compromise on mass surveillance and autonomous lethal targeting, the company forced the U.S. government to play its hand against its own defense-industrial base. Auditing Boeing and Lockheed Martin’s reliance on the very system the DoD wants to ban proves that AI integration is no longer a localized software update; it is structural load-bearing infrastructure. The battle over “murder bots” isn’t a theoretical debate set in the future. It is a crisis rapidly unfolding in the logistics networks of 2026, and the outcome will determine whether the intelligence layer of the internet remains a civilian utility or becomes a fully conscripted military asset.
