Key Takeaways

- The Scale: By commonly cited estimates, a single ChatGPT query uses roughly 10x the energy of a Google search. Multiply that by billions of queries, and the grid starts to strain.

- The Bottleneck: It’s not chips; it’s transformers (the electrical kind) and the grid behind them. Utilities cannot build transmission lines fast enough to power new gigawatt-scale data centers.

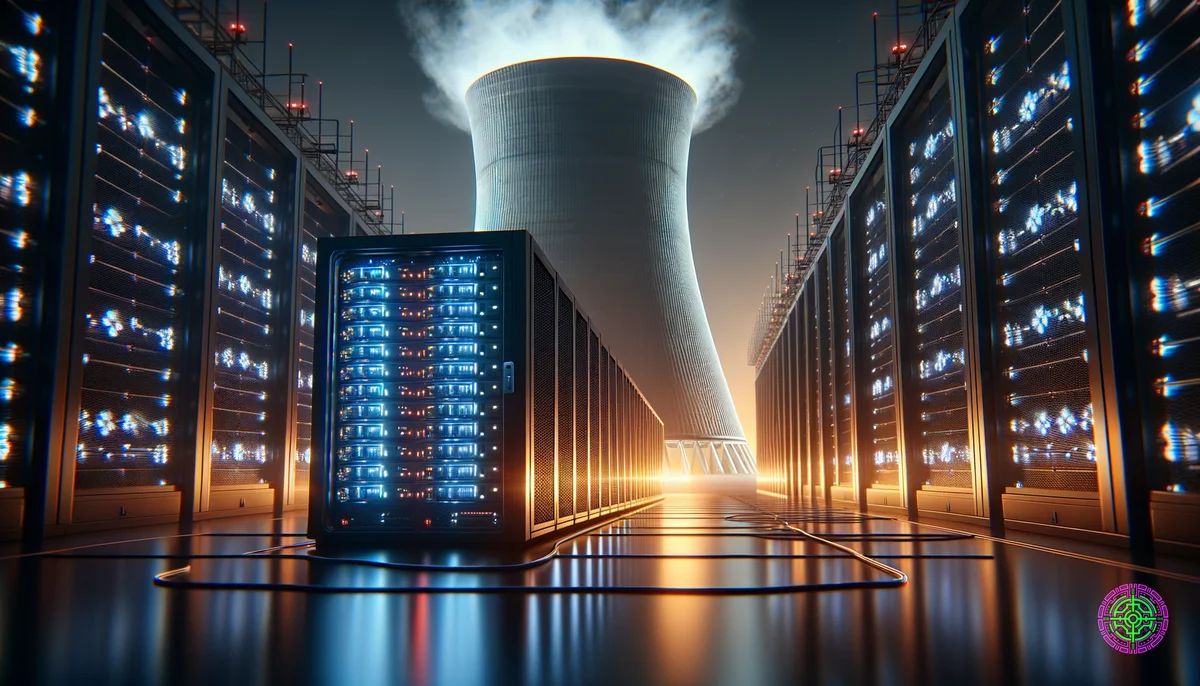

- The Solution: Tech giants are securing their own power supply. Microsoft, Amazon, and Google are investing billions in nuclear energy, from restarting existing reactors to backing Small Modular Reactors (SMRs), which are mini nuclear plants.

We thought the limit to AI would be intelligence. It turns out, it’s electricity.

In late 2025, the “AI Energy Crisis” is one of the biggest topics in Silicon Valley. Training a model like GPT-5 consumes as much energy as a small city. Running it (inference) for millions of users consumes even more over the model’s lifetime.

Data centers now account for about 1.5% of global electricity consumption, roughly 415 TWh in 2024. By 2030, the IEA projects this could more than double to around 945 TWh, slightly more than Japan consumes today.

The Scale of the Problem

To understand the crisis, you have to look at the math.

- Training vs. Inference: By some estimates, training a frontier model emits as much carbon as 300 trans-Atlantic flights. But that’s a one-time cost. The real drain is inference: every time you ask an agent to “plan my vacation,” GPUs in a data center spin up to answer, and that happens billions of times.

- Water Usage: It’s not just power. Data centers consume water to stay cool. Microsoft’s water consumption jumped 34% from 2021 to 2022, and data center water use has led to conflicts with local communities in drought-prone areas like Arizona.
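
To put rough numbers on the per-query claim, here is a back-of-the-envelope sketch. Every input is an illustrative assumption rather than a measurement: 0.3 Wh per web search (an often-cited older estimate), the 10x multiplier quoted in the takeaways, and a hypothetical one billion AI queries per day.

```python
# Back-of-the-envelope: energy of AI queries at scale.
# All inputs are illustrative assumptions, not measured values.

SEARCH_WH = 0.3                  # assumed energy per web search, in watt-hours
AI_MULTIPLIER = 10               # the "10x" ratio quoted in this article
QUERIES_PER_DAY = 1_000_000_000  # hypothetical daily AI query volume


def annual_energy_twh(wh_per_query: float, queries_per_day: float) -> float:
    """Return yearly energy in terawatt-hours for a given per-query cost."""
    wh_per_year = wh_per_query * queries_per_day * 365
    return wh_per_year / 1e12  # 1 TWh = 1e12 Wh


ai_wh = SEARCH_WH * AI_MULTIPLIER
ai_twh = annual_energy_twh(ai_wh, QUERIES_PER_DAY)
search_twh = annual_energy_twh(SEARCH_WH, QUERIES_PER_DAY)
print(f"AI queries: {ai_twh:.2f} TWh/year")
print(f"Same volume of searches: {search_twh:.2f} TWh/year")
```

Under these assumptions the AI workload lands at about 1.1 TWh a year, ten times the search figure. The result scales linearly with each input, so the total is only as good as the guess for query volume.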

The Nuclear Renaissance: Big Tech’s New Bet

Renewables (solar/wind) are great, but they are intermittent. AI needs 24/7 baseload power with 99.999% uptime. Batteries are currently too expensive for this gigawatt scale.
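
The “five nines” figure is easier to appreciate as allowed downtime. A small sketch of the arithmetic, assuming a 365-day year (the function name is just for illustration):

```python
# Convert an availability percentage into allowed downtime per year.
# Assumes a 365-day year; the function name is illustrative.

MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes


def downtime_minutes_per_year(availability_pct: float) -> float:
    """Minutes of outage per year permitted at the given availability."""
    if not 0 <= availability_pct <= 100:
        raise ValueError("availability must be between 0 and 100 percent")
    return MINUTES_PER_YEAR * (1 - availability_pct / 100)


for pct in (99.0, 99.9, 99.999):
    print(f"{pct}% uptime -> {downtime_minutes_per_year(pct):.1f} min/year")
```

At 99.999% the budget is a little over five minutes of outage per year, which is why a power source that drops out when the wind stops does not fit without large-scale backup.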

Enter Nuclear.

- Microsoft: Signed a historic 20-year power purchase agreement (PPA) with Constellation Energy to restart Three Mile Island (Unit 1) and power its AI cloud. The long-term contract gives Microsoft a guaranteed supply of carbon-free power.

- Amazon: Bought a data center campus directly connected to the Susquehanna nuclear plant, bypassing the public grid entirely.

- Google: Investing heavily in fusion startups and SMRs (Small Modular Reactors). SMRs, sometimes pitched as “nuclear batteries,” are designed to be manufactured in factories and shipped to data center sites, avoiding the decade-long construction timelines of traditional plants.

Big Tech has effectively become the new utility sector. They are funding the next generation of clean energy infrastructure because they have no choice. The grid cannot keep up with them.

The Irony: Green AI, Dirty Power

There is a bitter irony here. We are building AI to help us solve climate change (optimizing grids, designing new materials), but the creation of that AI is currently driving up emissions.

- The “Jevons Paradox”: As AI becomes more efficient, we use it more, leading to higher total consumption.

- The Reality Check: Google’s greenhouse gas emissions rose 48% between 2019 and 2023, largely due to data center energy use and AI infrastructure. The company has stopped claiming carbon neutrality and has acknowledged that reaching its net-zero-by-2030 goal is getting harder as AI demand grows.
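
The Jevons effect is simple arithmetic: total consumption is energy per query times number of queries, so efficiency gains only cut the total if usage grows more slowly than efficiency improves. A sketch with made-up numbers (the 2x efficiency gain and 3x usage growth are hypothetical, chosen only to illustrate):

```python
# Jevons paradox as arithmetic: total = energy per query * query count.
# The growth factors below are hypothetical, chosen only to illustrate.


def total_energy_change(efficiency_gain: float, usage_growth: float) -> float:
    """Ratio of new total energy to old total energy.

    efficiency_gain: factor by which energy per query falls (2.0 = half).
    usage_growth: factor by which query volume rises (3.0 = triple).
    """
    if efficiency_gain <= 0 or usage_growth < 0:
        raise ValueError("efficiency_gain must be > 0 and usage_growth >= 0")
    return usage_growth / efficiency_gain


ratio = total_energy_change(efficiency_gain=2.0, usage_growth=3.0)
print(f"Total energy changes by a factor of {ratio:.2f}")
```

In this example each query costs half as much, yet total consumption still rises 50% because usage tripled.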

The Future: A Bifurcated Grid?

We are heading toward a bifurcated grid: one for the people, and one for the machines.

- The Public Grid: Struggling with aging infrastructure, intermittent renewables, and rising costs for consumers.

- The Private Grid: Powered by dedicated nuclear and geothermal assets, owned by trillion-dollar tech companies, ensuring their AI never sleeps.

The question is no longer “Can we build it?” but “Who pays for the upgrade?” If history is any guide, the consumer will foot the bill for the grid, while Big Tech builds its own fortress of power.
