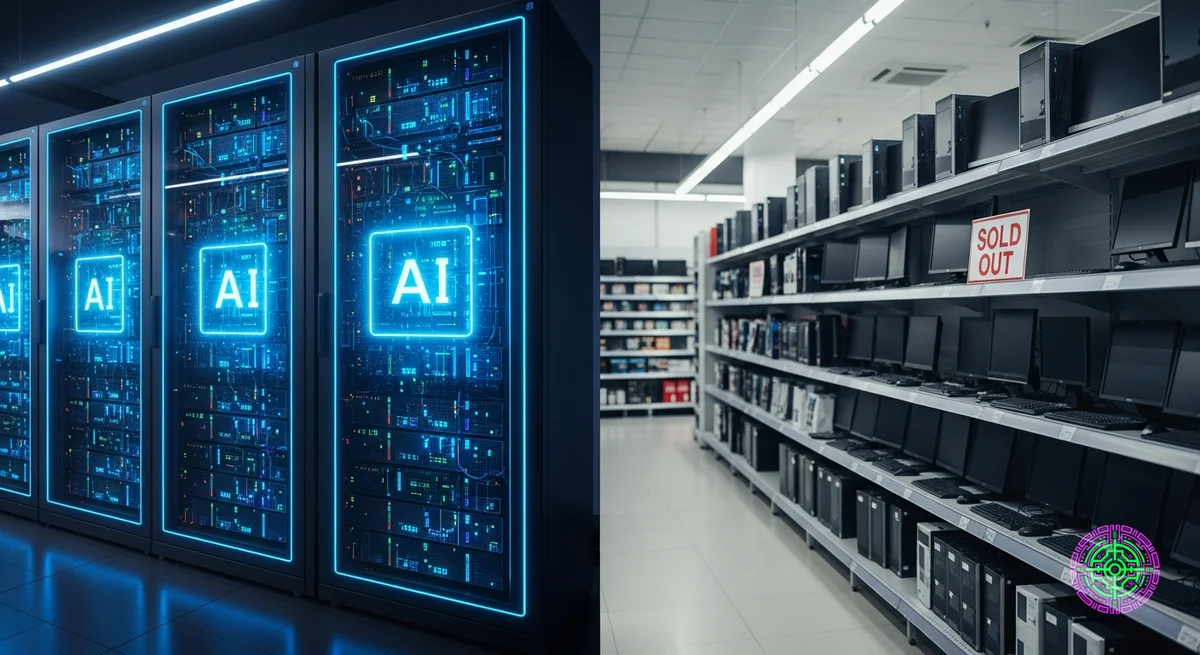

If you’ve tried to build a PC or upgrade your laptop in the last month, you’ve likely noticed something alarming: RAM prices are climbing, and stock is vanishing.

This isn’t just a holiday rush or a supply chain hiccup. We are in the early stages of a structural memory shortage that analysts predict could last until 2027. The culprit? The insatiable appetite of Artificial Intelligence.

The AI Pivot

The root cause is a massive pivot by the world’s leading memory manufacturers—Samsung, SK Hynix, and Micron—away from consumer memory and toward High Bandwidth Memory (HBM).

HBM is the lifeblood of AI accelerators like Nvidia’s Blackwell and Google’s Trillium TPUs. It offers the massive throughput required to train and run large language models. But HBM is difficult to manufacture: it requires stacking DRAM dies with through-silicon vias (TSVs) and advanced packaging techniques.

[!NOTE] The Trade-off: Bit for bit, HBM consumes significantly more wafer capacity than standard DDR5. Every fab line converted to HBM is a line taken away from the RAM that goes into your laptop or gaming PC.

The DDR4 Squeeze

The shortage is hitting legacy systems the hardest. Manufacturers are aggressively phasing out DDR4 production to free up capacity for DDR5 and HBM.

This has created a bizarre market inversion:

- DDR4 prices have surged by over 50% in some markets as supply dries up faster than demand falls.

- DDR5 prices are also rising, but primarily due to raw demand from data centers upgrading their non-AI servers.

According to reports from Tom’s Hardware, major manufacturers are delaying the launch of next-gen memory modules from late 2025 to 2026 because the market simply cannot support them right now.

Consumer Impact

The impact is already trickling down to retail:

- Price Hikes: Expect to pay 30-50% more for RAM kits compared to early 2025.

- Delays: Custom PC builders are facing longer lead times.

- Hardware Postponements: There are rumors that GPU refreshes (which depend on GDDR memory) and SSD availability (NAND flash comes from the same manufacturers that make DRAM) could be impacted.

The Outlook

Don’t expect a quick fix. Building new semiconductor fabs takes years, and manufacturers are hesitant to over-expand after the memory crash of 2023.

As Microchip USA notes, the industry is prioritizing high-margin AI chips over commodity consumer parts. Until the AI infrastructure build-out stabilizes, the humble stick of RAM is going to be a luxury item.

The Bottom Line: If you need RAM, buy it now. The “AI Tax” on hardware is just getting started.

The Technical Bottleneck: Why HBM Eats Wafers

To understand why this shortage is so persistent, we need to look at the silicon level. It’s not just that manufacturers are “choosing” AI; it’s that AI memory is incredibly inefficient to produce compared to standard RAM.

High Bandwidth Memory (HBM) is built by vertically stacking multiple DRAM dies (layers) on top of each other, connected by thousands of microscopic channels called Through-Silicon Vias (TSVs).

- Yield Rates: The complexity of TSV stacking means that if one layer in a stack of 8 or 12 is defective, the entire stack can be ruined. This results in lower yield rates compared to standard planar DDR5.

- Wafer Consumption: Per gigabyte, HBM3e can consume 3x to 4x the wafer area of standard DDR5 when you account for the logic die and yield losses.

In simple terms: the HBM in a single multi-GPU AI server ties up silicon capacity that could otherwise have produced hundreds of consumer laptop RAM kits, and a full rack of such servers reaches into the thousands.
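The yield point above can be made concrete with a little arithmetic. The sketch below assumes the simplest possible model: every layer fails independently, and one bad layer scraps the whole stack. Real production lines test dies before stacking and build in repair options, so treat this as an illustration of the compounding effect, not a description of any manufacturer's process. The function name and the 98% per-layer figure are hypothetical.

```python
def stack_yield(layer_yield: float, layers: int) -> float:
    """Chance that every layer in a stack is good.

    Assumes independent failures and no repair or pre-sorting of dies,
    which is a deliberate simplification.
    """
    if not 0.0 <= layer_yield <= 1.0:
        raise ValueError("layer_yield must be between 0 and 1")
    if layers < 1:
        raise ValueError("layers must be at least 1")
    return layer_yield ** layers


if __name__ == "__main__":
    # 0.98 is a made-up per-layer yield chosen only for illustration.
    for layers in (1, 8, 12):
        print(f"{layers:>2} layers: {stack_yield(0.98, layers):.1%} good stacks")
```

Under these assumed numbers, a 98% per-layer yield falls to roughly 85% for an 8-high stack and roughly 78% for a 12-high stack, which is why taller stacks cost disproportionately more silicon.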

Historical Context: The Crypto Déjà Vu

If this feels familiar, it is. In 2017-2018, the cryptocurrency mining boom caused a similar squeeze. GPU prices tripled in some cases, and DDR4 prices spiked as manufacturers prioritized GDDR memory for graphics cards.

However, there is a critical difference:

- Crypto was volatile: Demand could (and did) crash overnight.

- AI is structural: The demand for AI infrastructure is being driven by trillion-dollar capex plans from Microsoft, Google, Meta, and Amazon. This isn’t a bubble that will pop in six months; it’s a multi-year industrial shift.

Buying Advice: How to Survive the Squeeze

If you are planning a build in late 2025 or 2026, here is your survival guide:

1. Prioritize Capacity Over Speed

For most users, the difference between DDR5-6000 and DDR5-7200 is negligible in real-world gaming, but the price gap between them is widening. Stick to the “sweet spot” (currently DDR5-6000 at CL30) and avoid overpaying for bleeding-edge kits, which are the ones most affected by the shortage.
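To compare kits on paper, two standard back-of-the-envelope figures help: first-word latency (CAS latency converted to nanoseconds) and theoretical peak bandwidth per 64-bit module. Here is a minimal sketch; the CL34 timing for the DDR5-7200 kit is an assumption chosen for illustration, so substitute the timings of the kit you are actually considering.

```python
def first_word_latency_ns(data_rate_mts: int, cas_latency: int) -> float:
    # DDR transfers twice per clock, so one clock cycle lasts
    # 2000 / data_rate_mts nanoseconds.
    return cas_latency * 2000 / data_rate_mts


def peak_bandwidth_gbs(data_rate_mts: int, bus_bytes: int = 8) -> float:
    # Theoretical peak for one 64-bit (8-byte) module, in GB/s.
    return data_rate_mts * bus_bytes / 1000


if __name__ == "__main__":
    kits = {
        "DDR5-6000 CL30": (6000, 30),
        "DDR5-7200 CL34": (7200, 34),  # CL34 is an assumed timing
    }
    for name, (rate, cl) in kits.items():
        print(
            f"{name}: {first_word_latency_ns(rate, cl):.2f} ns, "
            f"{peak_bandwidth_gbs(rate):.1f} GB/s per module"
        )
```

With these inputs the faster kit is ahead by about half a nanosecond of latency and about 20% in peak bandwidth. Those are theoretical ceilings, and in-game differences are typically far smaller than the on-paper gap suggests.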

2. Buy Sooner Rather Than Later

If you are sitting on 16GB and thinking about upgrading to 32GB “someday,” make that day today. Spot market prices are leading indicators, and they are already flashing red. Retail prices typically lag by 4-6 weeks, so you have a small window before the full impact hits Amazon and Newegg.
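To see what that lag means in practice, here is a rough sketch that shifts a weekly spot-price index forward by a fixed number of weeks to estimate when retail catches up. The index values are invented for illustration, and the model assumes retail simply mirrors spot after the lag, which real pricing will not do exactly.

```python
def projected_retail(spot_index: list[float], lag_weeks: int) -> dict[int, float]:
    """Map each future week number to the spot value expected to reach retail.

    Week 0 is the first week of spot data. Assumes retail tracks spot
    one-for-one after lag_weeks, a deliberate oversimplification.
    """
    if lag_weeks < 0:
        raise ValueError("lag_weeks must not be negative")
    return {week + lag_weeks: value for week, value in enumerate(spot_index)}


if __name__ == "__main__":
    # Hypothetical spot index (week 0 = 100), not real market data.
    spot = [100.0, 104.0, 112.0, 125.0, 140.0]
    for lag in (4, 6):
        print(f"lag of {lag} weeks:")
        for week, value in projected_retail(spot, lag).items():
            print(f"  week {week}: retail index ~{value:.0f}")
```

The takeaway is the shape rather than the numbers: whatever the spot market did over the past month is roughly what retail shelves are about to do.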

3. Don’t Ignore the Used Market

Unlike SSDs, which wear out as they are written to, RAM has no comparable wear mechanism and is incredibly durable. Used DDR4 kits from reputable sellers on eBay or r/hardwareswap are often safe bets. With new DDR4 production plummeting, the used market may soon be the only reasonably priced source for high-capacity legacy kits.

4. Laptop Users: Check Your Slots

If you are buying a laptop in 2026, beware of soldered RAM. As memory becomes more expensive, manufacturers will likely solder 16GB (or even 8GB) directly to the board, which saves them the cost of SODIMM slots and rules out later upgrades. Always buy the configuration you need upfront, because the era of cheap, user-upgradeable SODIMMs is on hold.
